The Intelligence Inflection Point: When Pipelines Become a Full-Time Job

What part of your pipeline wastes the most time?

1. Introduction: When Pipelines Become a Full-Time Job

You open a pull request.

The build fails.

The error references line 742 in a YAML file.

You scan indentation.

You check anchors and conditionals.

You compare with another repo’s workflow.

What should be automation often turns into manual troubleshooting.

Platforms like Jenkins, GitHub Actions, and GitLab CI/CD have made delivery faster. But as organizations scale, pipelines grow into layered systems that handle builds, containers, security scans, infrastructure provisioning, testing matrices, and environment promotion logic.

At some point, the pipeline itself becomes one of the most complex parts of the stack.

That is where AI is starting to reshape the workflow.

2. The Real Problem: Configuration at Scale

2.1 Pipeline Growth Outpaces Standardization

In many teams:

Each service has its own variation of CI logic

Copy-paste patterns accumulate

Conditional rules expand over time

Environment-specific exceptions multiply

The result is configuration drift across repositories.

Pipelines begin to reflect historical decisions rather than current architecture.
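One way to make drift visible is to fingerprint each repository's pipeline definition and group the repositories that share one. The sketch below assumes the definitions have already been parsed into plain dictionaries (for example by a YAML parser); the repository names and fields are hypothetical.

```python
import hashlib
import json
from collections import defaultdict
from typing import Dict, List


def fingerprint(pipeline: dict) -> str:
    """Hash a parsed pipeline definition, ignoring key order."""
    canonical = json.dumps(pipeline, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def group_by_fingerprint(pipelines: Dict[str, dict]) -> Dict[str, List[str]]:
    """Map each fingerprint to the repositories that share it."""
    groups = defaultdict(list)
    for repo, definition in pipelines.items():
        groups[fingerprint(definition)].append(repo)
    return dict(groups)


# Hypothetical, already-parsed pipeline definitions.
pipelines = {
    "billing": {"stages": ["build", "test", "deploy"], "cache": True},
    "search": {"cache": True, "stages": ["build", "test", "deploy"]},
    "legacy-api": {"stages": ["build", "deploy"], "cache": False},
}

groups = group_by_fingerprint(pipelines)
for digest, repos in groups.items():
    print(digest, sorted(repos))
```

More than one group means the repositories have diverged. The size of each group hints at which variant is the de facto standard.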

2.2 Debugging Becomes Forensic Work

When builds fail, engineers often:

Read thousands of lines of logs

Manually correlate commit changes

Search internal Slack threads

Compare with previous successful runs

This is reactive work.

It consumes senior engineering time.

3. What “Smart Pipelines” Actually Mean

The shift is less about adopting another tool and more about changing how engineers interact with pipelines.

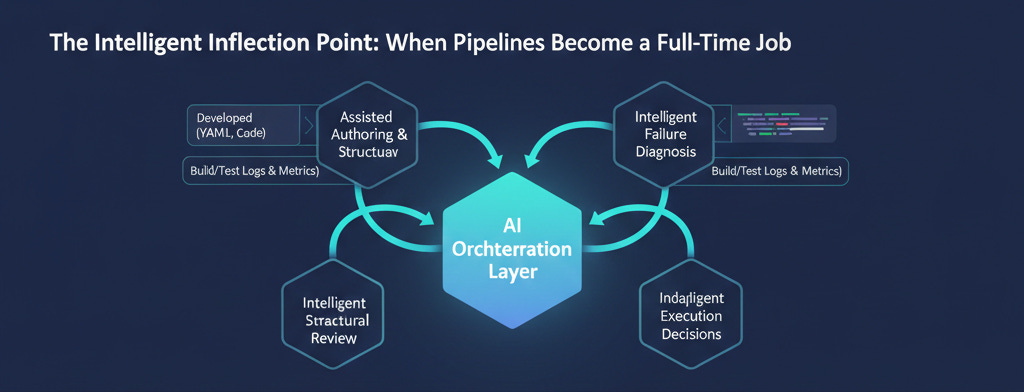

AI introduces assistance at four practical layers.

3.1 Assisted Authoring

Instead of building pipelines step by step:

Describe the project context

Generate a baseline workflow

Apply organization-wide standards automatically

This reduces boilerplate and enforces consistency from day one.

For growing teams, this is a governance advantage.
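Whatever produces the baseline workflow, organization-wide standards can be applied deterministically afterwards. The sketch below assumes a generated workflow arrives as a dictionary; the field names (`stages`, `timeout_minutes`) and the standards themselves are hypothetical, not taken from any specific CI platform.

```python
import copy

# Hypothetical organization-wide standards.
STANDARDS = {
    "required_stages": ["lint", "security-scan"],
    "max_timeout_minutes": 30,
}


def apply_standards(workflow: dict, standards: dict) -> dict:
    """Return a copy of the workflow with required stages and a capped timeout."""
    result = copy.deepcopy(workflow)
    stages = result.get("stages", [])
    # Prepend any required stage the generated baseline left out.
    missing = [s for s in standards["required_stages"] if s not in stages]
    result["stages"] = missing + stages
    # Cap the timeout at the organization's limit.
    limit = standards["max_timeout_minutes"]
    result["timeout_minutes"] = min(result.get("timeout_minutes", limit), limit)
    return result


# Hypothetical generated baseline for a service.
generated = {"name": "payments-ci", "stages": ["build", "test"], "timeout_minutes": 120}
workflow = apply_standards(generated, STANDARDS)
print(workflow["stages"], workflow["timeout_minutes"])
```

Keeping this step outside the generator means the standards live in version control and apply the same way to every generated workflow.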

3.2 Structural Review and Optimization

AI systems can analyze pipeline definitions and identify:

Redundant jobs

Missing caching strategies

Inefficient parallelization

Security misconfigurations

This functions as continuous pipeline review, not just code review.
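Two of these checks, redundant jobs and missing caching, can be approximated with simple rules before any model is involved. This is a minimal sketch under assumed conventions: jobs are dictionaries with a `steps` list and an optional `cache` flag, and a step containing the word "install" is treated as a dependency install. Both conventions are illustrative.

```python
from typing import Dict, List


def review_pipeline(jobs: Dict[str, dict]) -> List[str]:
    """Flag jobs that duplicate another job's steps or install without a cache."""
    findings = []
    seen = {}  # step sequence -> first job that used it
    for name, job in jobs.items():
        steps = job.get("steps", [])
        key = tuple(steps)
        if key in seen:
            findings.append(f"redundant: '{name}' repeats the steps of '{seen[key]}'")
        else:
            seen[key] = name
        installs = any("install" in step for step in steps)
        if installs and not job.get("cache"):
            findings.append(f"no-cache: '{name}' installs dependencies without a cache")
    return findings


# Hypothetical job definitions.
jobs = {
    "unit": {"steps": ["npm install", "npm test"], "cache": True},
    "unit-copy": {"steps": ["npm install", "npm test"], "cache": False},
    "lint": {"steps": ["npm run lint"]},
}

findings = review_pipeline(jobs)
for finding in findings:
    print(finding)
```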

3.3 Intelligent Failure Diagnosis

When builds break, AI can:

Parse logs across stages

Correlate errors with recent changes

Identify likely root causes

Suggest focused remediation steps

This shortens investigation cycles and reduces context switching.
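The correlation step can be illustrated without a model: pull the error lines out of a log and rank them by whether they mention a file touched in the change. The sketch below is a simplified heuristic; the log format, the error keywords, and the file extensions are assumptions for illustration.

```python
import re
from typing import Dict, List

# Assumed error keywords and source-file extensions.
ERROR_LINE = re.compile(r"\b(error|failed|exception)\b", re.IGNORECASE)
FILE_REF = re.compile(r"[\w./-]+\.(?:py|js|ts|go|java|ya?ml)\b")


def diagnose(log_text: str, changed_files: List[str]) -> List[Dict[str, object]]:
    """Return error lines, ranked so those naming changed files come first."""
    changed = set(changed_files)
    suspects = []
    for number, line in enumerate(log_text.splitlines(), start=1):
        if not ERROR_LINE.search(line):
            continue
        mentioned = set(FILE_REF.findall(line))
        suspects.append({
            "line": number,
            "text": line.strip(),
            "changed_files": sorted(mentioned & changed),
        })
    # Stable sort: lines that reference changed files rise to the top.
    suspects.sort(key=lambda s: len(s["changed_files"]), reverse=True)
    return suspects


# Hypothetical build log and change set.
log = """[build] compiling sources
[test] FAILED tests/test_tax.py::test_rounding
[test] error: assertion failed in src/billing/tax.py:42
[deploy] skipped"""

suspects = diagnose(log, ["src/billing/tax.py", "README.md"])
for suspect in suspects:
    print(suspect["line"], suspect["changed_files"], suspect["text"])
```

An AI-based diagnosis would go further than keyword matching, but the inputs are the same: logs across stages plus the set of recent changes.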

3.4 Data-Driven Execution Decisions

Over time, AI can learn patterns such as:

Frequently failing test suites

High-risk deployment windows

Services with unstable builds

Historical rollback triggers

Pipelines begin to respond to operational signals rather than static rules.

This is where CI/CD transitions from scripted automation to adaptive orchestration.
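As a small illustration of one such signal, the failure rate per test suite can be computed from run history and used to flag unstable suites. The thresholds and the `(suite, passed)` record shape below are assumptions chosen for the example.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple


def unstable_suites(
    runs: Iterable[Tuple[str, bool]],
    min_runs: int = 5,
    threshold: float = 0.2,
) -> Dict[str, float]:
    """Return failure rates for suites that fail at least `threshold` of the time."""
    totals, failures = Counter(), Counter()
    for suite, passed in runs:
        totals[suite] += 1
        if not passed:
            failures[suite] += 1
    flagged = {}
    for suite, total in totals.items():
        if total < min_runs:
            continue  # too little history to judge
        rate = failures[suite] / total
        if rate >= threshold:
            flagged[suite] = rate
    return flagged


# Hypothetical run history: (suite name, passed?).
history = (
    [("api", True)] * 9 + [("api", False)]
    + [("ui", True)] * 3 + [("ui", False)] * 3
    + [("db", False)] * 2
)

flagged = unstable_suites(history)
print(flagged)
```

The `min_runs` guard keeps a suite with only a couple of recorded runs from being flagged on thin evidence.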

4. Impact on the DevOps Role

As pipeline intelligence increases, the DevOps focus shifts.

Less emphasis on:

Maintaining repetitive YAML blocks

Debugging syntax edge cases

Manually tuning each repository

More emphasis on:

Defining deployment policies

Setting guardrails for automation

Designing reusable workflow standards

Evaluating risk signals before release

The work becomes architectural and systemic rather than purely operational.

5. Practical Use Cases in Real Teams

5.1 Standardized Pipeline Templates Across 100+ Services

AI can analyze common patterns and generate consistent pipelines aligned with internal security and compliance requirements.

This reduces review overhead and onboarding time.

5.2 Test Selection Based on Code Changes

Instead of running full regression suites on every commit, AI can evaluate changed files and trigger only relevant tests.

Faster feedback. Lower compute cost.
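A rule-based version of this idea maps path prefixes to the test suites that cover them and falls back to the full suite when a changed file is not covered by any mapping. The sketch below assumes such an ownership map exists; all paths and suite names are hypothetical.

```python
from typing import Dict, List


def select_tests(
    changed_files: List[str],
    ownership: Dict[str, List[str]],
    full_suite: List[str],
) -> List[str]:
    """Pick test suites for the changed files, or everything if impact is unknown."""
    selected = set()
    for path in changed_files:
        matches = [tests for prefix, tests in ownership.items() if path.startswith(prefix)]
        if not matches:
            # Unmapped file: impact is unknown, so run the full suite.
            return sorted(full_suite)
        for tests in matches:
            selected.update(tests)
    return sorted(selected)


# Hypothetical mapping from source prefixes to test suites.
OWNERSHIP = {
    "services/billing/": ["tests/billing"],
    "services/search/": ["tests/search"],
    "libs/common/": ["tests/billing", "tests/search"],
}
FULL_SUITE = ["tests/billing", "tests/search", "tests/e2e"]

print(select_tests(["services/billing/tax.py"], OWNERSHIP, FULL_SUITE))
print(select_tests(["Dockerfile"], OWNERSHIP, FULL_SUITE))
```

The fallback is the important design choice: a selection mechanism that silently skips tests for files it does not understand trades correctness for speed.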

5.3 Smarter Deployment Controls

Rather than static approval gates, deployment decisions can incorporate:

Historical failure data

Current system metrics

Risk scoring models

Rollouts become evidence-driven.
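A risk score of this kind can be as simple as a weighted sum of the three inputs above. The weights, thresholds, and signal names in this sketch are illustrative assumptions, not recommended values.

```python
from dataclasses import dataclass


@dataclass
class Signals:
    recent_failure_rate: float  # share of recent deployments that failed, 0..1
    current_error_rate: float   # live error rate of the target system, 0..1
    changed_lines: int          # size of the change being deployed


def risk_score(signals: Signals) -> float:
    """Combine the signals into a 0..1 score using assumed weights."""
    size = min(signals.changed_lines / 1000, 1.0)  # saturate at 1000 lines
    return (
        0.5 * signals.recent_failure_rate
        + 0.3 * signals.current_error_rate
        + 0.2 * size
    )


def decide(signals: Signals, auto_limit: float = 0.2, block_limit: float = 0.6) -> str:
    """Translate the score into a deployment decision."""
    score = risk_score(signals)
    if score >= block_limit:
        return "block"
    if score >= auto_limit:
        return "manual-approval"
    return "auto-approve"


print(decide(Signals(0.05, 0.01, 120)))
print(decide(Signals(0.4, 0.1, 800)))
print(decide(Signals(0.9, 0.5, 2000)))
```

A learned model could replace `risk_score`, while `decide` stays a small, reviewable policy.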

6. Constraints and Guardrails

AI-assisted pipelines require structure:

Version-controlled prompts or configuration rules

Clear policy boundaries

Auditable decision trails

Human validation for critical production changes

Intelligence without governance creates new operational risk.
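Two of these guardrails, auditable decision trails and human validation for production, can be enforced in a few lines. The sketch below uses a hypothetical record format, and the `rules_version` field assumes the prompts or rules are version-controlled as described above.

```python
import json
from datetime import datetime, timezone
from typing import Optional


def record_decision(
    action: str,
    environment: str,
    rationale: str,
    rules_version: str,
    approved_by: Optional[str] = None,
) -> str:
    """Return a JSON audit entry; refuse production actions without an approver."""
    if environment == "production" and not approved_by:
        raise PermissionError("production changes require a named human approver")
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "action": action,
        "environment": environment,
        "rationale": rationale,
        "rules_version": rules_version,  # e.g. a commit hash of the rules repo
        "approved_by": approved_by,
    }
    return json.dumps(entry, sort_keys=True)


# Hypothetical automated action in a non-critical environment.
audit_line = record_decision(
    action="skip-e2e-tests",
    environment="staging",
    rationale="no changed files map to e2e coverage",
    rules_version="abc1234",
)
print(audit_line)
```

Writing each entry as one JSON line keeps the trail easy to append to and easy to search later.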

7. Where This Is Heading

Pipelines are evolving into feedback systems.

Future workflows will likely include:

Continuous optimization of execution order

Automated rollback reasoning

Adaptive environment promotion strategies

Ongoing refactoring of pipeline logic based on usage patterns

The YAML file becomes an implementation detail, not the primary interface.

Conclusion: Designing the Next Generation of Delivery Systems

Pipeline complexity will continue to grow as systems scale.

Manual configuration management alone does not scale at the same rate.

AI introduces structured assistance across authoring, review, debugging, and optimization. The opportunity is to reduce repetitive configuration work and focus engineering effort on system design, reliability strategy, and policy definition.

For DevOps engineers, the next step is practical experimentation:

Identify repetitive pipeline patterns in your organization

Introduce AI-assisted generation in non-critical environments

Measure time saved in debugging and review cycles

Gradually expand usage under defined governance

CI/CD is not becoming less important. It is becoming more intelligent.

The teams that adapt early will spend less time maintaining pipelines and more time improving how software moves from commit to production.